Description

In this course, you will:

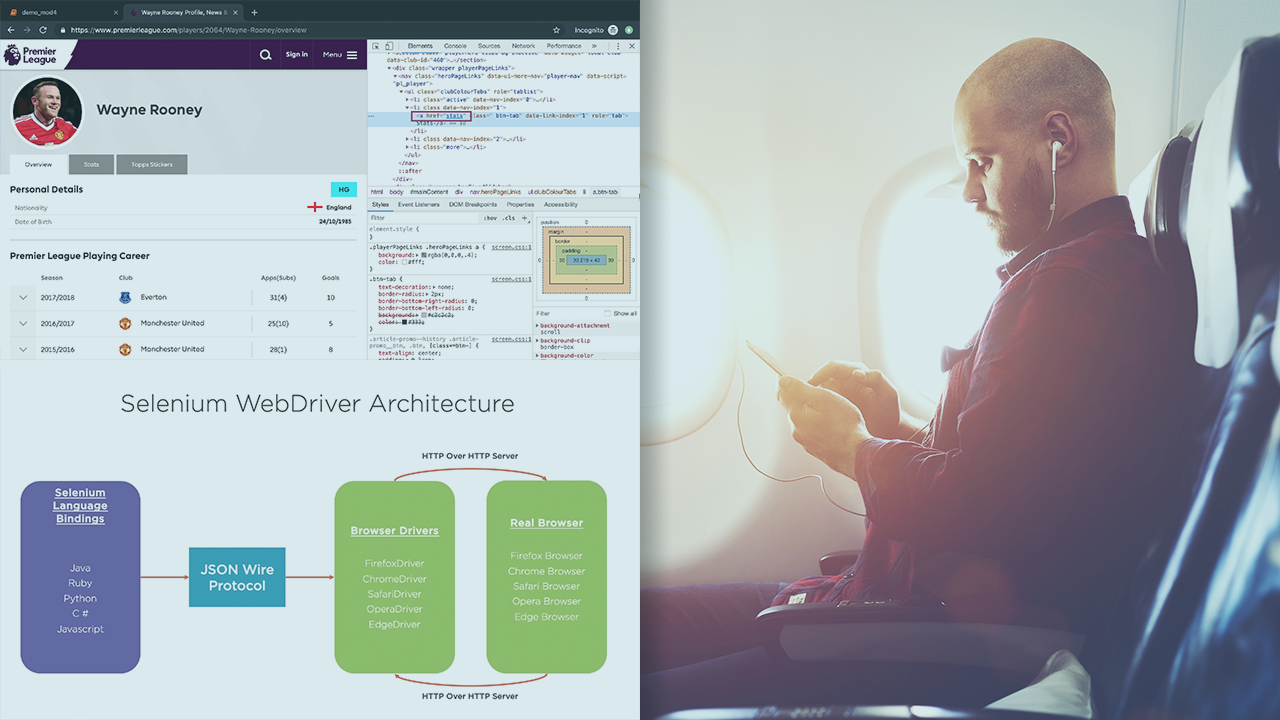

- Learn how to use PySpark to process data in a data lake in a structured manner. Of course, you must first determine whether PySpark is the best tool for the job.

- Be able to explain what a data platform is, how data gets into it, and how data engineers build its foundations.

- Be able to ingest data from a RESTful API into the data platform's data lake using an ingestion pipeline that you write yourself with Singer's taps and targets.

- Explore various types of testing and learn how to write unit tests for your PySpark data transformation pipeline so that you can create robust and reusable components.

- Explore the fundamentals of Apache Airflow, a popular piece of software that enables you to trigger the various components of an ETL pipeline on a time schedule and execute tasks in a specific order.
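To give a flavor of the ingestion objective above, here is a minimal sketch of the kind of output a Singer tap produces: JSON messages, one per line, starting with a `SCHEMA` message followed by `RECORD` messages. It uses only the standard library rather than the Singer helper package, and the stream name `users`, the schema, and the sample rows are hypothetical, not taken from the course material.

```python
import json


def tap_messages(stream, schema, rows, key_properties):
    """Yield Singer-style JSON lines: one SCHEMA message, then one RECORD per row."""
    yield json.dumps({
        "type": "SCHEMA",
        "stream": stream,
        "schema": schema,
        "key_properties": key_properties,
    })
    for row in rows:
        yield json.dumps({"type": "RECORD", "stream": stream, "record": row})


# Hypothetical data standing in for a RESTful API response.
schema = {
    "type": "object",
    "properties": {"id": {"type": "integer"}, "name": {"type": "string"}},
}
rows = [{"id": 1, "name": "Ada"}, {"id": 2, "name": "Grace"}]

lines = list(tap_messages("users", schema, rows, ["id"]))

if __name__ == "__main__":
    # A real tap writes these lines to stdout so they can be piped into a target.
    for line in lines:
        print(line)
```

A target would read these lines from standard input and write the records to their destination, such as a file in the data lake.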

Syllabus:

- Ingesting Data

- Creating a data transformation pipeline with PySpark

- Testing your data pipeline

- Managing and orchestrating a workflow